Publications

Finding our common moral values: Guidelines for value system aggregation

The research community has already produced a breadth of ap-

proaches to resolve several value alignment problems. However,

in the pursuit of value alignment, we usually need to know which

values we want our AI to align with. This problem, called value

inference, has caught some attention lately with many approaches

to detect which moral values are relevant in a context, or to build a

model (called value system) representing the values and priorities of

an individual. However, another important task in value inference

is that of value system aggregation. This consists in aggregating

the moral value models of several individuals to obtain one rep-

resenting everybody. So far, only one value system aggregation

method has been proposed. In this paper, we discuss why research

in value system aggregation is paramount and the possible avenues

to implement value system aggregation depending on the value

alignment problem at hand.

DemocrAI 2025: The 6th International Workshop on Democracy & AI —

Best Student Paper award

PDF

Reinforcement Learning Fine-tuning of Language Models is Biased Towards More Extractable Features

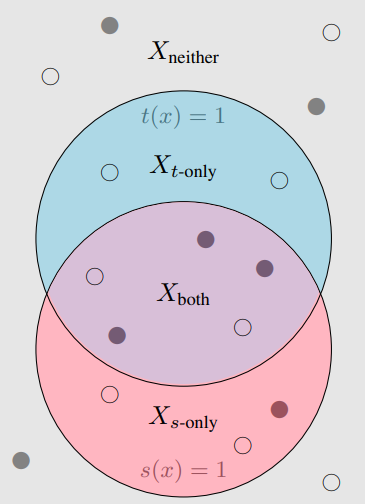

Many capable large language models (LLMs) are developed via self-supervised

pre-training followed by a reinforcement-learning fine-tuning phase, often based

on human or AI feedback. During this stage, models may be guided by their

inductive biases to rely on simpler features which may be easier to extract, at a

cost to robustness and generalisation. We investigate whether principles governing

inductive biases in the supervised fine-tuning of LLMs also apply when the finetuning process uses reinforcement learning. Following Lovering et al. [2021], we

test two hypotheses: that features more extractable after pre-training are more

likely to be utilised by the final policy, and that the evidence for/against a feature

predicts whether it will be utilised. Through controlled experiments on synthetic

and natural language tasks, we find statistically significant correlations which

constitute strong evidence for these hypotheses.

Socially Responsible Language Modelling Research (SoLaR) 2023

PDF